Assigned Mail At Birth

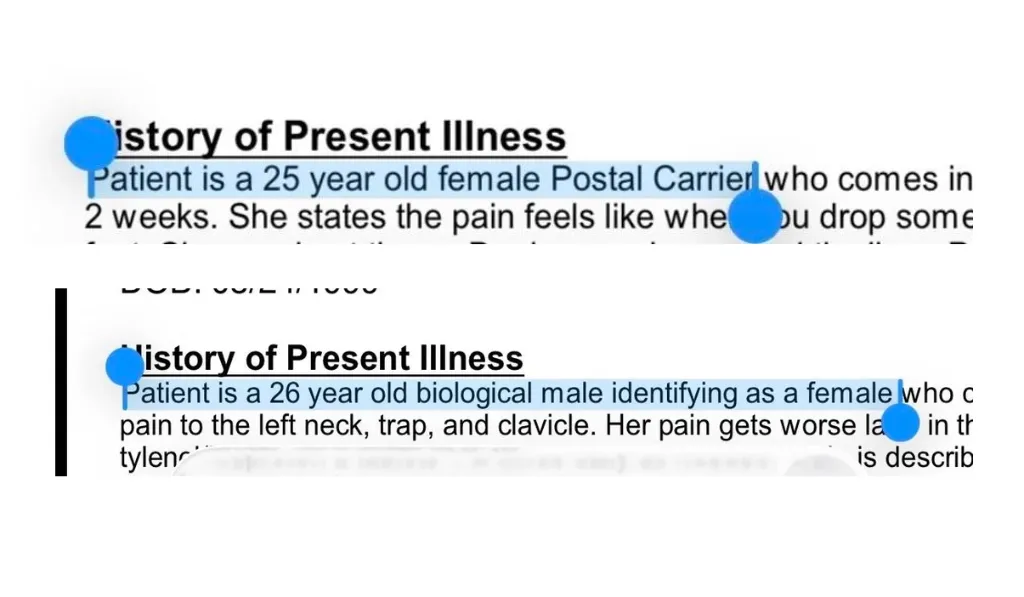

A mailwoman in the US was receiving orthopedic treatment for issues relating to the physical demands of her job. There's a lot of lifting, carrying, and walking involved in delivering mail, which can lead to pain and inflammation. One day, when checking her medical documentation, she noticed the orthopedist had suddenly and incorrectly reregistered her as a transgender person.

Respondents were quick to point out that AI usage was the likely root cause. At some point, an AI transcription service mistranscribed "mail" as "male". And since the point of using an AI transcription service in the first place is that slop is quicker and cheaper than medical records created by a qualified medical professional, the mistranscription became a documented medical "fact".

Further replies suggest that it's far from an isolated incident.

My doc has me listed as having 2 children. I have 2 cats. Pretty sure it's the ai thing they use to take notes.

Yeah, I have nurse friends who have been forced to use shitty AI tools for notes with disastrous results, and this could be one of those mistakes.

you need to write/document the issue, why it's incorrect, and request the doctor (or charting medical provider) to make the correction. I had to do it last year for something similar to OP where it was a huge, and extremely concerning, error and I actually switched providers over it due to the implications.

Given this particular mistake (mine was more abuse/neglect oriented for my child and was an AI hallucination from their AI charting program) and the current political climate I would absolutely blast this provider and find the root issue and potentially report it all the way up.

If your doctor uses AI that could be it… I recently went to a new doctor who uses AI to transcribe and I told him I was engaged. I look at the transcription and it says me: “I am gay” doctor:“you are gay?” Me: “Yeah” 😂😂

They were 100% using AI for chart notes, it's very common nowadays. However they're SUPPOSED to review for accuracy, but a lot of them don't. It's the bane of my existence as a medical coder.

Our AI note translator translated that a patient has a hot mama instead of a heart murmur. Patients mama was indeed hot but thankfully never asked for a history before the error was picked up.

Here in Sweden, this brings exactly one company to mind. Oracle.

Oracle are the masterminds of the disastrous "Millennium" patient journal system. Millennium is best known for being so incredibly bad that healthcare workers literally protested outside hospitals to try to stop it from being rolled out due to patient safety fears.

The same two regions of Sweden that were at the epicentre of the Millennium scandal - Västra Götaland and Skåne - were reported in 2024 to have integrated a service called Oracle Clinical Digital Assistant, which has since been renamed Oracle Health Clinical AI Agent.

Immediately after capturing and analyzing a conversation between you and your patient, Oracle Health Clinical AI Agent automatically generates a structured note for you.

Good luck out there everyone!